What Is Edge AI Robotics and Why Everyone Is Talking About It

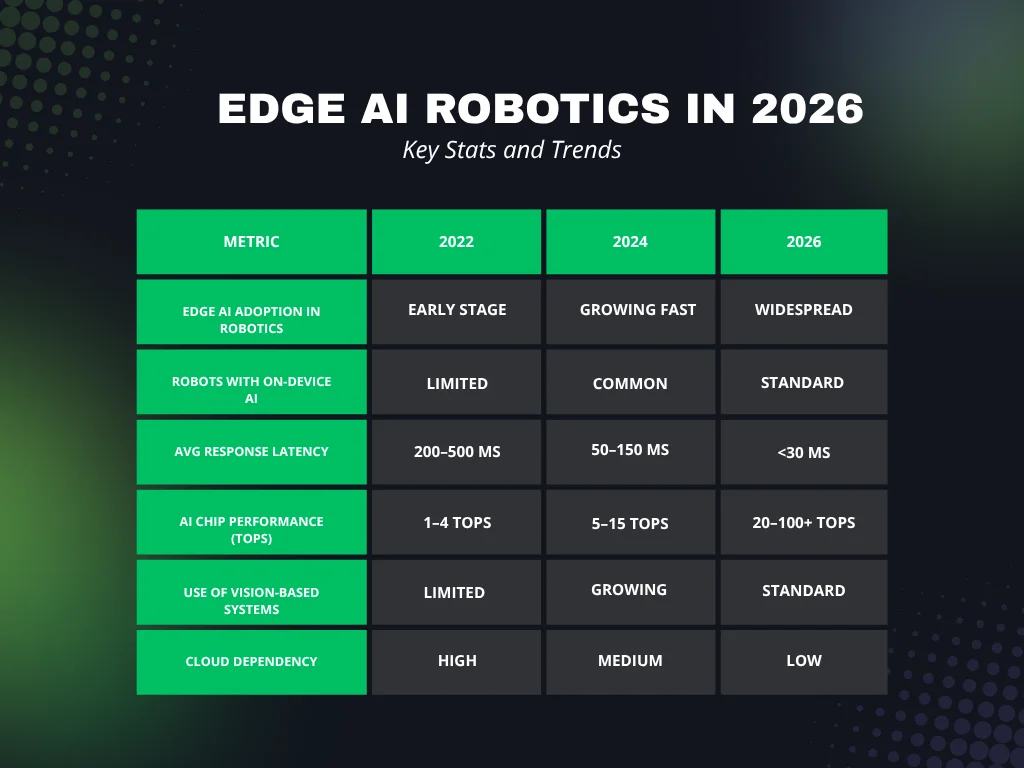

If you have been seeing more edge AI robotics news lately, there is a good reason. The field is moving fast and is no longer just about experiments: real robots are already working in real places.

Edge AI robotics means robots run their AI models and make decisions directly on the device. They do not need to send data to the cloud and wait for a response; everything happens locally. This makes them faster and more reliable.

Before, many robots depended on cloud systems. They would capture data, send it somewhere, wait, and then act. That works for some tasks, but not for real-time work. If a robot is moving, lifting, or interacting with people, even a small delay can cause problems.
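To make the cost of a delay concrete, here is a minimal Python sketch that estimates how far a moving robot travels while it waits for a decision. The speed and the two latency figures are hypothetical values chosen for illustration, not measurements from any real system.

```python
def distance_during_delay(speed_m_per_s: float, delay_ms: float) -> float:
    """Distance travelled (in metres) while the robot waits for a decision."""
    return speed_m_per_s * (delay_ms / 1000.0)


# Hypothetical figures, for illustration only.
SPEED_M_PER_S = 1.5          # assumed speed of a mobile robot
CLOUD_ROUND_TRIP_MS = 200.0  # assumed cloud round-trip time
ON_DEVICE_MS = 20.0          # assumed on-device inference time

cloud_gap = distance_during_delay(SPEED_M_PER_S, CLOUD_ROUND_TRIP_MS)
edge_gap = distance_during_delay(SPEED_M_PER_S, ON_DEVICE_MS)

print(f"cloud: {cloud_gap:.2f} m travelled before reacting")
print(f"on-device: {edge_gap:.2f} m travelled before reacting")
```

With these assumed numbers, the robot covers about ten times more ground before reacting when it waits on the cloud, which is the gap the paragraph above describes.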

That is why edge AI is becoming the standard. It allows robots to react without waiting on a network round trip. It also means they can keep working even if the internet is slow or completely unavailable.

Latest Edge AI Robotics News: Real Industry Updates in 2026

The biggest change in recent news is simple. Robots are now being used in real jobs, not just tested in labs.

One example comes from heavy industry. A U.S. shipbuilding company is testing AI robots in production, using machines that can sand metal, grind surfaces, and inspect parts. These are not easy tasks. The environment is messy and unpredictable, but the robots can handle it because they run AI locally. They can adjust in real time instead of following a fixed script.

Another recent development is robots that can talk and respond to people. At a major tech event, a conversational edge AI robot powered by NVIDIA demonstrated that it can understand speech and reply almost instantly. The important detail is that it does this on-device, so there is no delay from cloud processing. This makes interaction feel natural.

AI Robotics Expansion

Big companies are also investing heavily. One well-known example is the large-scale AI robotics expansion in Amazon's operations, where over a million robots are already in use and more AI capabilities are now being added. This shows that robotics is not just a future idea; it is already core infrastructure.

There are also startups building new systems where robots learn from video. Instead of programming every movement, developers can show a robot how something works. The robot then learns patterns and applies them in real-world situations, marking a major shift in how robots are trained.

Hardware Trends and New AI Chips

Hardware is one of the main reasons this progress is happening. Edge AI chips are getting stronger and more efficient. Examples include the DeepX DX-M1/DX-M1M and the Rockchip RK3588.

A modern edge AI robotics system often includes a CPU, a GPU, and a small NPU. The CPU handles general tasks. The GPU helps with visual processing. The NPU is designed for AI workloads like image recognition and object detection.

Companies are now building special chips just for robots. Some call them robot brains. Major semiconductor companies are now reporting growing demand for physical AI chips in robotics, which shows that the hardware side is scaling quickly.

Energy efficiency is also very important. Many robots run on batteries, and if the chip uses too much power, the robot cannot run for long. That is why newer chips focus on doing more work with less energy. This is one of the key points in edge AI robotics news: better chips mean smarter, cheaper robots.
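The battery trade-off can be sketched with simple arithmetic: runtime is battery capacity divided by total power draw. The Python snippet below is a rough estimate only; the battery size, the motor and sensor load, and the two compute power figures are hypothetical and do not describe any specific chip.

```python
def runtime_hours(battery_wh: float, base_load_w: float, compute_w: float) -> float:
    """Estimated runtime in hours for a given battery and power draw."""
    total_w = base_load_w + compute_w
    if total_w <= 0:
        raise ValueError("total power draw must be positive")
    return battery_wh / total_w


# Hypothetical figures, for illustration only.
BATTERY_WH = 200.0   # assumed battery capacity
BASE_LOAD_W = 40.0   # assumed motors, sensors, and other electronics

older_chip = runtime_hours(BATTERY_WH, BASE_LOAD_W, compute_w=30.0)
newer_chip = runtime_hours(BATTERY_WH, BASE_LOAD_W, compute_w=10.0)

print(f"30 W compute: {older_chip:.1f} h")
print(f"10 W compute: {newer_chip:.1f} h")
```

Under these assumptions, cutting compute power from 30 W to 10 W extends runtime from roughly 2.9 hours to 4 hours, even though the motors and sensors draw the same power in both cases.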

Real World Use Cases in 2026

Edge AI robotics is already being used in many industries:

1) In factories, robots can now handle objects that are not perfectly placed. They can adjust their movement, recognize different shapes, and continue working even if something changes.

2) In warehouses, robots move goods, scan packages, and avoid obstacles. They use cameras and sensors to understand their environment, process data locally, and react instantly.

3) In healthcare, robots are helping with simple tasks like delivering supplies. Some systems can also monitor patients. Edge AI allows them to process data safely without sending it to external servers.

4) In homes, robots are getting smarter. A robot vacuum is a simple example. Older ones moved randomly. New ones create maps, plan routes, and avoid obstacles like shoes or pets.

Vision Systems and Small AI Models

One strong trend in edge AI robotics is the use of cameras instead of complex sensors. Vision-based systems are becoming more common. Instead of adding expensive hardware, companies use AI to understand what the camera sees. This reduces cost and makes robots more flexible.

Another trend is smaller AI models. Large models are powerful but heavy. They need a lot of memory and energy. So developers are creating lightweight models that can run directly on edge devices.

These models are faster and more efficient. They are not as large, but they are good enough for real tasks.
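One common way to shrink a model is quantization, which stores weights as small integers instead of 32-bit floats. The article does not say which techniques any particular vendor uses, so the Python sketch below is only an illustration of the idea: a symmetric 8-bit scheme applied to a handful of made-up weights.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


# Made-up weights, for illustration only.
weights = [0.52, -1.27, 0.03, 0.9]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("quantized:", quantized)
print(f"largest rounding error: {max_error:.4f}")
```

Each quantized weight fits in one byte instead of the four bytes of a 32-bit float, at the cost of a small rounding error. That is the kind of trade-off behind "not as large, but good enough".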

Edge AI Robotics vs Cloud Robotics Comparison

To better understand the difference, here is a simple comparison.

| Feature | Cloud-Based Robotics | Edge AI Robotics | Hybrid Robotics |
| --- | --- | --- | --- |
| Processing Location | Remote servers | On-device | Both |
| Response Speed | Slower | Very fast | Medium |
| Internet Dependency | High | Low | Medium |
| Power Usage | Lower device load | Higher device load | Balanced |
| Privacy | Lower | Higher | Medium |
| Flexibility | High with cloud | High locally | Very high |

Edge AI robotics stands out because it is fast and independent. That is why it is growing so quickly.
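For the hybrid column, one possible policy is to run a task on the device whenever it can meet its deadline there, and to use the cloud only when a connection is available and the cloud can still meet the deadline. The Python sketch below shows that idea; the function name `choose_target`, the task timings, and the `"edge-degraded"` fallback label are all hypothetical.

```python
def choose_target(deadline_ms: float, edge_ms: float,
                  cloud_ms: float, online: bool) -> str:
    """Pick where to run a task under a simple, hypothetical policy."""
    if edge_ms <= deadline_ms:
        return "edge"            # local processing meets the deadline
    if online and cloud_ms <= deadline_ms:
        return "cloud"           # offload heavier work when it is safe to wait
    return "edge-degraded"       # stay local with a simpler fallback


# Hypothetical timings in milliseconds, for illustration only.
print(choose_target(deadline_ms=50, edge_ms=20, cloud_ms=200, online=True))
print(choose_target(deadline_ms=2000, edge_ms=5000, cloud_ms=800, online=True))
print(choose_target(deadline_ms=2000, edge_ms=5000, cloud_ms=800, online=False))
```

In this sketch, time-critical work such as obstacle avoidance stays on the device, while slower, heavier jobs can go to the cloud when the network allows it.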

Software Platforms and Development Tools

Software is also improving. Building AI models is not enough; developers need tools to run them on real devices. Many platforms now help convert AI models into formats that work efficiently on edge hardware. This makes deployment easier.

There are also robotics frameworks that help control movement and behavior; when combined with edge AI, they allow robots to act in real time.

And, of course, open-source projects are growing as well. Developers can share tools, models, and ideas, which helps speed up innovation.

Autonomous Robots, Physical AI, and New Capabilities

A new term you will see in edge AI robotics news is physical AI. This simply means AI that works in the real world, inside machines. Robots are no longer just following instructions; they are starting to understand their environment.

Autonomous robots, such as delivery bots and drones, are a good example. They need to move, avoid obstacles, and make decisions constantly, and edge AI allows them to do this without delay.

Safety is also improving. If a robot detects something dangerous, it can react instantly, which is important when robots work near people.
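A simplified way to see why reaction time matters for safety is to compare the distance to an obstacle with the distance the robot needs to stop. The Python sketch below uses basic kinematics (distance covered during the reaction time plus braking distance); the speed, deceleration, margin, and reaction times are hypothetical, and a real safety system would involve far more than this check.

```python
def must_stop(obstacle_m: float, speed_m_s: float, reaction_s: float,
              decel_m_s2: float, margin_m: float = 0.2) -> bool:
    """Return True if the robot should brake now to stop before the obstacle."""
    reaction_dist = speed_m_s * reaction_s               # travelled before braking starts
    braking_dist = speed_m_s ** 2 / (2.0 * decel_m_s2)   # v^2 / (2a)
    return obstacle_m <= reaction_dist + braking_dist + margin_m


# Hypothetical figures: obstacle 0.6 m ahead, robot at 1.0 m/s, braking at 2.0 m/s^2.
slow_reaction = must_stop(0.6, speed_m_s=1.0, reaction_s=0.2, decel_m_s2=2.0)
fast_reaction = must_stop(0.6, speed_m_s=1.0, reaction_s=0.02, decel_m_s2=2.0)

print("200 ms reaction, must brake already:", slow_reaction)
print("20 ms reaction, must brake already:", fast_reaction)
```

With these assumed values, a robot that reacts in 200 ms already has to brake at 0.6 m, while one that reacts in 20 ms still has room to keep working. A shorter reaction time lets the robot operate closer to people with the same safety margin.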

Another big change is how robots are trained. Many are now trained in simulation first: developers create virtual environments where robots can learn safely. After that, the robots are moved to real-world tasks. This makes development faster and cheaper.

Challenges in Edge AI Robotics and What Comes Next

Even with all this progress, there are still challenges. Power consumption is one of them. Running AI locally uses energy, so developers need to keep improving efficiency. Heat is another issue: small devices can overheat when running complex models.

Data is also important. Robots need good data to learn, and collecting and preparing this data takes time. There is also a need for standard tools so that different systems can work together more easily. But overall, the direction is clear: edge AI robotics is growing and becoming more practical every year.

FAQ Section

What is edge AI robotics in simple words?

It means robots can think and make decisions directly on the device without using the cloud.

Why is edge AI better for robots?

It makes them faster and more reliable because they do not need to wait for internet responses.

Where is edge AI robotics used today?

Factories, warehouses, healthcare, and home devices.

What is physical AI?

It is AI that works in real machines and interacts with the physical world.

Are robots replacing people?

Not really. They are mostly helping with repetitive or dangerous tasks.

Conclusion

The latest news shows a clear shift. Robots are becoming faster, smarter, and more independent. They can now work in real environments, handle complex tasks, and even interact with people.

This is happening because of better hardware, smaller AI models, and smarter software tools. Edge AI is moving intelligence from the cloud into real machines. We are now at a point where robots are not just tools; they are systems that can see, understand, and act in real time.

This is just the beginning. As technology improves, edge AI robotics will become a normal part of everyday life, and that future is arriving faster than we expected.